This is a guest post by Cameron Laird

Companies want to deliver quality, but they must also balance development timelines, market demands, and other pressures to ship new features as quickly as possible. Building QA into your software development lifecycle (SDLC) and testing thoroughly is the key to delivering quality consistently. However, if your organization treats QA as an afterthought, as a bottleneck, or even as a single step in the SDLC, your product will most likely be poorly tested, and QA will be seen as a barrier to releases.

In our last post, DevOps Testing Culture: How to Build Quality Throughout the SDLC, we discussed how to start building QA into your SDLC. This post covers the five most common mistakes to avoid when building quality throughout the SDLC.

Positive goals inspire us. At the same time, it’s good practice to learn a few “bad smells” or warning signals and what to do when they turn up. Here are the five most common mistakes that QA teams make and how you can step around them to safety.

Testing without well-defined requirements or user stories

Sometimes the first and perhaps most significant step toward a healthy QA department is to stop. Do nothing. Take a break. Run no tests at all until the requirements for the software you’ve been assigned to test pass your own quality threshold.

Organizations fall into the habit of leaving software on QA’s metaphorical doorstep, assuming that QA can reverse-engineer, mind-read, and guess at the value that software represents for customers. Stop that in any of its forms. Insist that QA always tests against an explicit, written target. Creativity is a beautiful thing, but this is the wrong place for it. Any effort your staff spends figuring out what the software should do interferes with their ability to validate what it does. Accommodating muddy or vague requirements isn’t “nice”: it drains effort away from where it is needed.

Will a change like this challenge Product Management? Perhaps. The best application of QA creativity, though, is helping Product Management write good requirements, ideally from the start. Then you’ll know what they are, that they’re testable, and that they align with user stories. When you help write requirements before development begins, you can test the requirements themselves and verify their completeness, clarity, concision, consistency, and coherence.

Not every situation is ideal. During the transition, you might have to test products you didn’t help specify. Whatever happened before, make sure QA isn’t expected to guess at requirements. Requirements that turn up only after the software has been built are a symptom of deeper problems.

Those around you might judge you by your knowledge of testing frameworks or by how many bugs your testers find. However, you multiply your value by shifting how your organization operates toward clear, sensible goals built collaboratively with the end user in mind. Pausing testing until requirements are done right, or at least until they are clear, is a tremendous accomplishment and one worth aiming for.

How do you test requirements once you receive them? That’s a big subject, largely beyond the scope of this introduction. For now, draw on your experience: you know that subjective labels like “fast” need to be quantified. You know that requirements must account for “unhappy paths,” where end users do things no one expected. Provide oversight and review as early as possible in the SDLC, and show the whole product team that QA involvement pays off.
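To make the “fast” example concrete: once a requirement states a number, it can become an executable check. The sketch below assumes a hypothetical requirement, “95% of search requests complete within 200 ms,” and uses the nearest-rank percentile method. The threshold, the function names, and the choice of the 95th percentile are illustrative assumptions, not part of any particular product or tool.

```python
import math

# Hypothetical requirement, stated with a number instead of "fast":
# "95% of search requests complete within 200 ms."
P95_LIMIT_MS = 200.0  # assumed threshold, for illustration only


def percentile(samples_ms, pct):
    """Return the pct-th percentile of samples_ms using the nearest-rank method."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples_ms)
    # Nearest rank: the smallest value with at least pct% of samples at or below it.
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def meets_latency_requirement(samples_ms, limit_ms=P95_LIMIT_MS):
    """True if the 95th-percentile latency is within the stated limit."""
    return percentile(samples_ms, 95) <= limit_ms


if __name__ == "__main__":
    measured = [150] * 18 + [250, 300]  # made-up sample timings in ms
    print(meets_latency_requirement(measured))
```

With the number written down, “fast” stops being a matter of opinion: the same measurements either pass or fail, for testers and developers alike.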

Communicating through meetings

Does QA meet with Development to pass on its findings? There were once reasons to communicate that way, but now, in 2022, you need better channels.

QA typically generates collections of findings; such a collection might be called a “punch list,” “bug report,” “ticket collection,” and so on. It is crucial to communicate findings from QA to Development as soon as possible, but not through meetings.

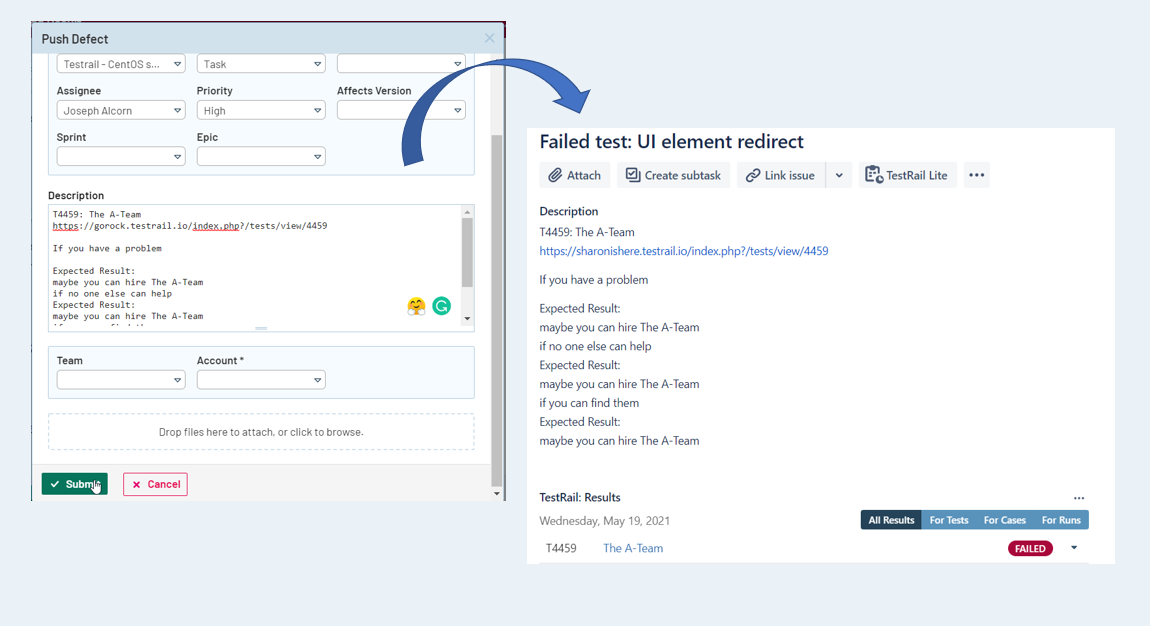

You probably already have a bug tracker or issue manager. Testers should enter items there as soon as they find them. Waiting for a weekly meeting doesn’t improve communication; start it the moment an issue is identified. Testers need to learn to write reports that make issues reproducible. Better yet, use a test management tool that generates bug reports automatically from test result data and your comments.

The skill of writing reproducible reports is enormously liberating for testers: once they have entered a bug report, they can walk away from it and turn their full attention to their next tasks. There’s no need to carry what to say around for several days until the Bug Meeting; everything that needs to be said is in the reproducible report.
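What “reproducible” means varies by team and tracker. As a minimal sketch, assuming a hypothetical report shape (title, environment, steps to reproduce, expected and actual results), a tester could check a report for gaps before filing it. The class and field names are illustrative and do not correspond to any specific issue manager’s schema.

```python
from __future__ import annotations

from dataclasses import dataclass, field, fields


@dataclass
class BugReport:
    """Hypothetical minimal shape for a reproducible bug report.

    Field names are illustrative; real trackers define their own schemas.
    """
    title: str
    environment: str  # e.g. build number, OS, browser
    steps_to_reproduce: list[str] = field(default_factory=list)
    expected: str = ""
    actual: str = ""

    def missing_fields(self) -> list[str]:
        """Return the names of empty fields, in declaration order."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def is_reproducible(self) -> bool:
        """Treat a report as complete only when no field is empty."""
        return not self.missing_fields()


if __name__ == "__main__":
    draft = BugReport(title="Login fails", environment="build 1.2.3, Chrome")
    print(draft.missing_fields())
```

A check like this only catches empty fields; whether the steps actually reproduce the issue is still the tester’s judgment.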

Communication through an issue manager also benefits developers in multiple ways. It spreads the cognitive load through the week, provides opportunities to research bugs in appropriate depth, and gives them the chance to bring the right talent to bear on specific items.

Meetings are great for discussing difficulties, sharing perspectives, piecing partial understandings into more complete plans, and establishing accountability. For routine communication of technical findings, though, we can do a lot better.

Replace the traffic jam of “bug report” meetings with a steady flow, throughout the week, of well-written, professional-grade reports from the testers who find issues to the developers who resolve them.

Making product decisions

Have you ever been asked whether a product is “good enough to ship”? As a leader in your QA department, you have an easy answer to that question, one that should never change: “That’s not my decision.”

“Go/no-go” is the domain of product management. As with product requirements, you help the organization most by clarifying boundaries, interfaces, and responsibilities. QA is in the business of discovering how well the software meets defined requirements; decisions about what goes to customers belong to a different domain.

You’re welcome to help with product management if you choose. You might have personal opinions about how customers will receive particular releases. Keep those opinions clearly separate from QA’s findings, and protect the integrity of QA’s core activities. QA must ensure that most of its energy goes to analyzing requirements and reporting any failure to meet them.

Getting crunched every sprint

Be suspicious of synchronization problems. Do you have a four-week sprint in which your testers are idle for the first ten days and then pull all-nighters during the last days? That’s a sure symptom of deeper difficulties. Change how your SDLC works so that the load spreads out and testers can work productively at every stage of a sprint. Near release, of course, QA tests for traditional bugs. Earlier, though, there’s likely to be plenty to do, such as:

- testing requirements themselves

- confirming that the test environment has adequate hardware capacity for the loads it will need to handle

- working with developers to get continuous testing (CT) in place

- running retrospectives on previous QA cycles

- and so on

More generally, any time one group waits on another is an opportunity to rethink dependencies and inventories in your workflows and perhaps smooth out the ebb and flow. An issue manager largely solves the “bug meeting” problem; smart use of better “data structures” can solve many other problems of excessive synchrony. A good ticket, incident, or item manager, for instance, should have reporting robust enough that managers can look up the status of a bug without asking a specialist to narrate its history. That’s one fewer conversation or meeting to schedule.

Here’s an example: QA testers can start reading requirements as soon as they are approved. Rather than waiting until the implementation is ready, review the written requirements in the first week of a sprint. Identify any ambiguities, and arrange for any special environment configurations. That moves some of the effort otherwise required in week six to a month earlier. It spreads QA’s load more sensibly and improves cooperation with other departments.

Big bangs of any kind

Be wary of drama. Structure QA activities so that day-to-day and sprint-to-sprint changes are mostly small. Help establish good routines that work across many cycles, rather than counting on heroic efforts or special events. Win the races you run through “slow and steady” effort. One of the most valuable tips you can pass on as a mentor to junior colleagues is to cut big assignments into smaller pieces and make progress daily, even hourly.

Tell your team and your peers that you will take the actions above. Tell them when these actions succeed and when you learn of adjustments you’ll need to make. QA can elevate quality and manage risk throughout the SDLC; with the right tactical choices, starting with those above, QA can build an outstanding record of living up to that potential. Follow through on these actions with strategic intent, and you’ll create a QA department that achieves more than others realize is possible.